When Adobe Inc. released its Firefly image-generating software last year, the company said the artificial intelligence model was trained mainly on Adobe Stock, its database of hundreds of millions of licensed images. Firefly, Adobe said, was a “commercially safe” alternative to competitors like Midjourney, which learned by scraping pictures from across the internet.

But behind the scenes, Adobe also was relying in part on AI-generated content to train Firefly, including from those same AI rivals. In numerous presentations and public posts about how Firefly is safer than the competition due to its training data, Adobe never made clear that its model actually used images from some of these same competitors.

Oh hey, look. The cycle of AI ingesting garbage output from another AI model has begun. This can’t possibly impact quality or reliability in any way /s

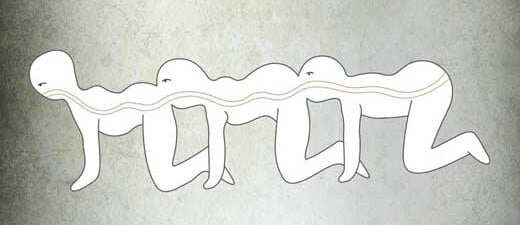

The AI centipede era has begun

Time to save the models we have now, ’cause they’ll never be quite the same.

AI ingesting the output of AI ingesting the output of AI…

Isn’t this causing a huge degradation in quality? It’s like compressing an image over and over again. Those “AI” models can only generate things based on what they know, and they already have a very real issue of looking samey because of it. So if we train models on that, and then another model on the new model, and repeat this over and over again, we’d end up with less and less quality & variety for each model, no?
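To make the "less variety each round" intuition concrete, here is a toy sketch in Python. It is not how Firefly or any real image model is trained. It assumes a stand-in "model" that can only reproduce items it has already seen, so each generation is just a random resample of the previous generation's output. The function name `simulate_generations` and its parameters are made up for illustration.

```python
import random


def simulate_generations(num_items=1000, generations=10, seed=0):
    """Return the number of distinct items present in each generation.

    Assumption (toy model): a "model" can only reproduce what it was
    trained on, so its output is a resample (with replacement) of its
    training set. Real generative models are far more complex.
    """
    rng = random.Random(seed)
    # Generation 0: "human-made" data, where every item is unique.
    data = list(range(num_items))
    distinct_counts = [len(set(data))]
    for _ in range(generations):
        # Train the next "model" on the previous output, then sample from it.
        data = [rng.choice(data) for _ in range(len(data))]
        distinct_counts.append(len(set(data)))
    return distinct_counts
```

Calling `simulate_generations()` gives one count per generation. Because every generation draws only from the one before it, the count of distinct items can never go up, and in practice it keeps dropping, which is the "photocopy of a photocopy" effect described above.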

I suppose the AI images submitted are done so because they turned out good, so there’s still a human selection process there. It’s not as bad as automatically feeding random generated images into the training.

This actually leads to more conformist images with more errors over time. Basically, if an AI takes images from us, it gets loads of creativity and outputs less creativity and more errors. So do that for a couple of rounds and you indeed end up with utter crap.

Why would they do this? Doesn’t that reduce the quality of the training dataset?

Depends how it’s done.

Full generative images would definitely start creating a copying error type problem.

However it’s not quite that simple. An AI system can be used to distort an image. The derivatives force the learning AI to notice different things. This can vastly extend the pool of data to learn from, and so improve the end AI.
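A rough sketch of the "distort an image to get more training data" idea, in Python. This uses plain flips and random noise rather than an AI system, purely as a stand-in, and it says nothing about what Adobe actually does. The helper name `augment` and the nested-list image format are assumptions for illustration.

```python
import random


def augment(image, seed=0):
    """Return simple distorted variants of a grayscale image.

    `image` is a list of rows, each a list of pixel values in 0..255.
    The original image is left unmodified.
    """
    rng = random.Random(seed)
    # Mirror each row left to right.
    flipped_lr = [row[::-1] for row in image]
    # Reverse the row order (top to bottom), copying each row.
    flipped_ud = [row[:] for row in image[::-1]]
    # Add small random noise, clamped to the valid pixel range.
    noisy = [
        [min(255, max(0, px + rng.randint(-10, 10))) for px in row]
        for row in image
    ]
    return [flipped_lr, flipped_ud, noisy]
```

One source image becomes three extra training examples here. The variants carry no new information about the world, but they can push a learner to rely less on orientation or exact pixel values.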

Adobe obviously decided that the copying errors were worth the extended datasets.

We always thought the singularity is when our technology would take off advancing without us.

Maybe that moment when it decides it doesn’t need us will be a rapid disintegration by machine circle jerk.

They [the Golgafrincham] sent the B ship off first, but of course, the other two-thirds of the population stayed on the planet and lived full, rich and happy lives until they were all wiped out by a virulent disease contracted from a dirty telephone.

AI daisy chain. One AI output is another AI input.

- Garbage in -> Garbage out (x2)

- Garbage in (x2) -> Garbage out (x4)

- Garbage in (x4) -> Garbage out (x8)

- Garbage in (x8) -> Garbage out (x16)

- …

Yea! Can you believe how long it took us to make garbage before all this?

Adobe said a relatively small amount — about 5% — of the images used to train its AI tool was generated by other AI platforms. “Every image submitted to Adobe Stock, including a very small subset of images generated with AI, goes through a rigorous moderation process to ensure it does not include IP, trademarks, recognizable characters or logos, or reference artists’ names,” a company spokesperson said.

Adobe Stock’s library has boomed since it began formally accepting AI content in late 2022. Today, there are about 57 million images, or about 14% of the total, tagged as AI-generated images. Artists who submit AI images must specify that the work was created using the technology, though they don’t need to say which tool they used. To feed its AI training set, Adobe has also offered to pay for contributors to submit a mass amount of photos for AI training — such as images of bananas or flags.